AI search is no longer experimental.

It’s a measurable acquisition channel.

At Omnius, we engineered AI visibility as a growth system, not as a content experiment.

Once the system started generating strong results, we showed them to clients and explained the potential, which increased demand for our GEO service.

Below is the exact process we used.

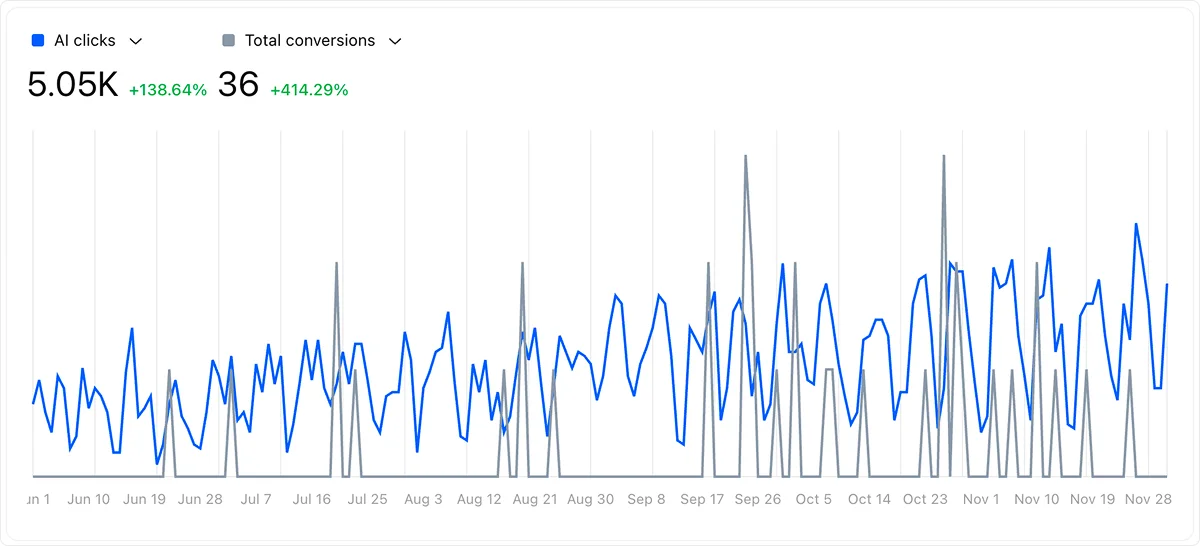

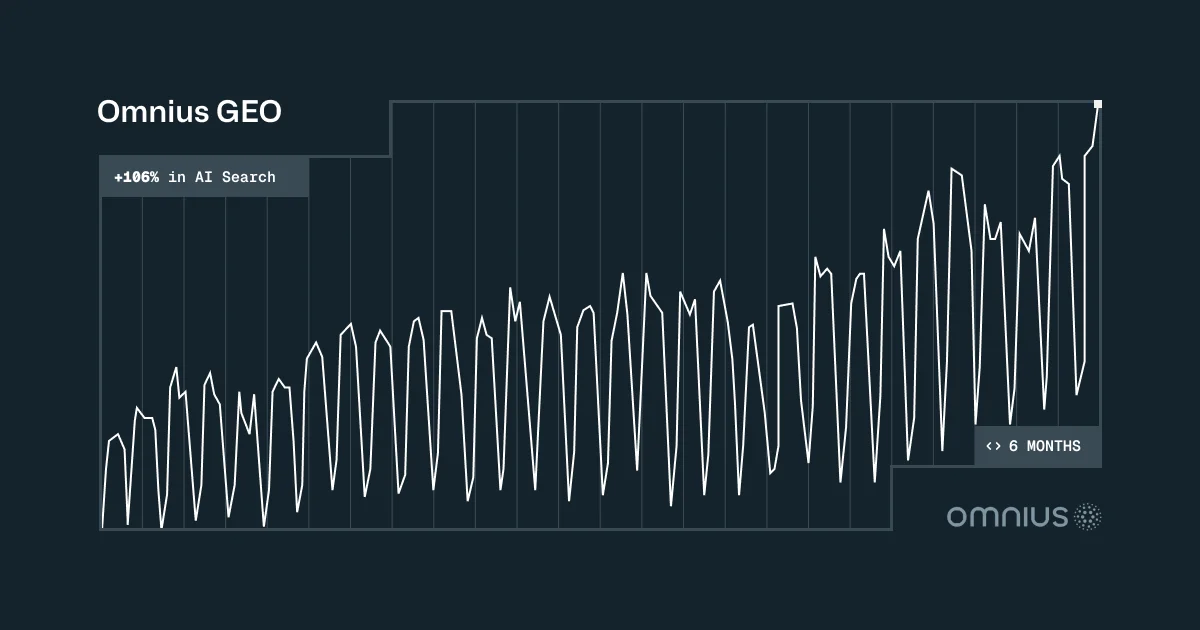

Results (6 Months vs Previous Period)

Over the last 6 months, Omnius achieved:

- 5.05K AI clicks (+138%)

- 36 SQLs generated (+414%)

- 47% average AI visibility

- Avg AI ranking position: 4.9

- 193 tracked citations

This was not luck.

It was systematic GEO.

Let’s get into how we did it.

Step 1. Target Prompts Instead of Keywords

Traditional SEO is based on search volume. GEO is based on decision language.

AI engines don’t just match keywords; they reconstruct intent from full questions. That means ranking for “fintech SEO agency” is not enough.

And it’s not about guessing the prompts. We reverse-engineered them directly from AI engines.

Here’s what we did:

- Manually ran hundreds of prompt discovery sessions in ChatGPT, Claude, and Perplexity

- Documented recurring phrasing patterns

- Identified where competitors were being cited

- Prioritized prompts related to agency selection

The key shift:

We stopped asking “What has volume?”

And started asking “What question triggers our agency evaluation?”

We found prompt patterns like:

- “What is the best [industry] SEO agency”

- “What are the top agencies for [niche]”

- “Who specializes in [X]?”

For example:

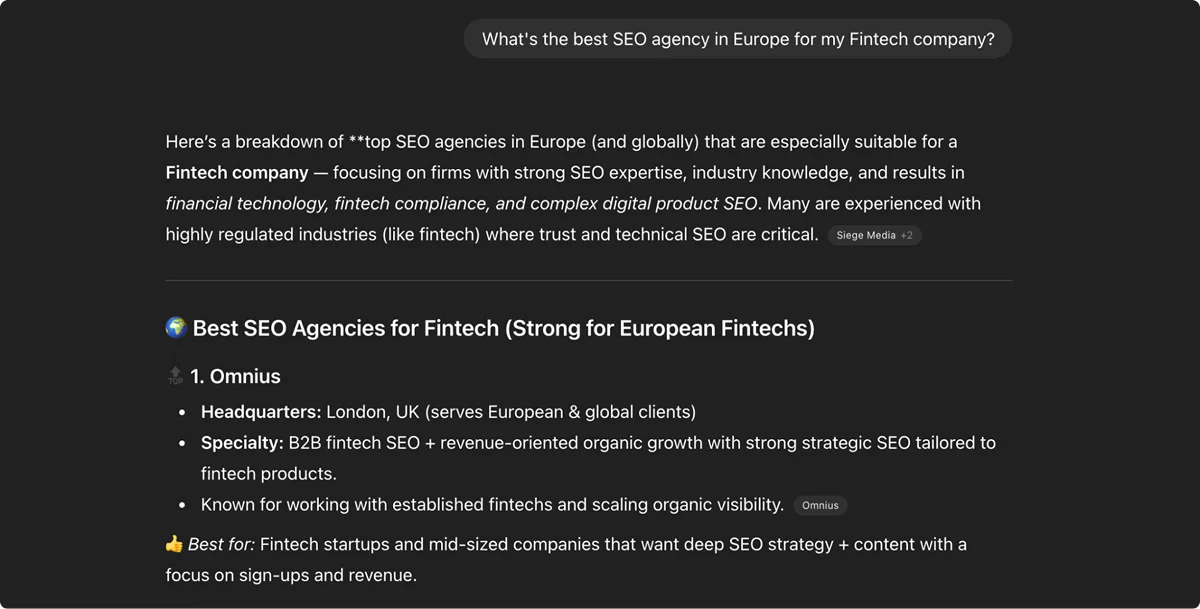

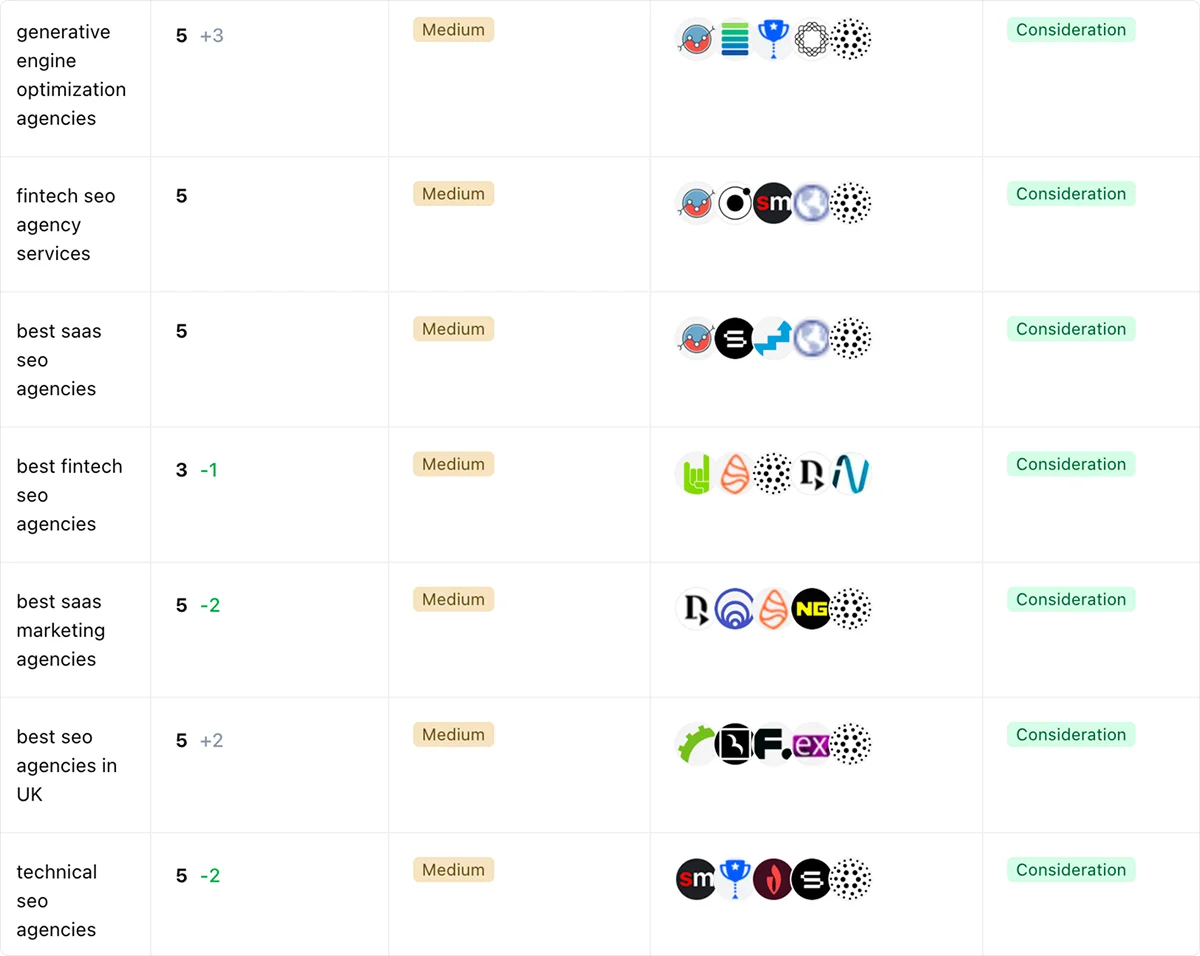
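
If you want to turn the prompt patterns above into a repeatable test list, a minimal sketch could look like this. The niches are hypothetical placeholders, and the single `{niche}` slot (plus the word "SEO" in the third template) is a simplification of the bracketed patterns, not the exact prompts we ran.

```python
from itertools import product

# Templates adapted from the prompt patterns listed above.
# Using one {niche} placeholder for all three is an assumption made for brevity.
TEMPLATES = [
    "What is the best {niche} SEO agency",
    "What are the top agencies for {niche}",
    "Who specializes in {niche} SEO?",
]

# Hypothetical niches; replace with the ones you actually serve.
NICHES = ["fintech", "SaaS", "B2B"]


def build_prompt_list(templates, niches):
    """Fill every template with every niche, in a stable order."""
    return [t.format(niche=n) for t, n in product(templates, niches)]


prompts = build_prompt_list(TEMPLATES, NICHES)
for prompt in prompts:
    print(prompt)
```

Running the same list in each engine on a schedule makes the phrasing patterns and competitor citations comparable over time.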

We prioritised decision and consideration-related prompts, then worked on improving the pages for them.

How?

By implementing structured micro-sections within the articles, focused on the details:

- Best for…

- Strong for…

- Not ideal for…

LLMs favor clearly categorized content. Make sure your niche positioning is repeated across:

- Homepage hero section

- About section

- Service pages

- Internal links

- Schema markup
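
For the schema markup item, here is a hedged sketch of generating Organization JSON-LD that repeats the niche positioning. The agency name and URL are placeholders, and the choice of the schema.org `knowsAbout` property to carry the specialties is our assumption about a reasonable fit, not a confirmed ranking factor.

```python
import json


def organization_jsonld(name, url, description, specialties):
    """Build a schema.org Organization object that restates niche positioning."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # knowsAbout is a schema.org property of Organization; using it for
        # niche specialties is an illustrative choice.
        "knowsAbout": list(specialties),
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Agency",
    url="https://example.com",
    description="We specialize in B2B SaaS and fintech SEO.",
    specialties=["B2B SaaS SEO", "Fintech SEO"],
)
print(json.dumps(markup, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the page.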

Outcome:

We now appear in structured recommendation blocks for buyer-stage prompts.

These screenshots show Omnius being ranked at the top of ChatGPT responses for high-intent prompts.

Below is another example showing our positioning inside comparative prompts.

Notice the positioning and structured formatting, which was engineered through modular answer architecture.

Applied consistently to build entity authority, these actions ensured:

- We appear in decision-stage prompts

- We are cited alongside major agencies

- The response format favors structured breakdowns

Step 2. We Created Content for Citations, Not Just Ranking

To avoid losing citations to badly organized content, we needed our pages to answer a prompt in under 20 seconds.

If your point isn’t clear in the first 2–3 paragraphs, it may never appear in the final AI citations.

To avoid that and ensure we optimize the content for LLMs, we:

- Moved positioning and direct answer/definitions of the topic into the first 150 words

- Added TL;DR summary blocks

- Reduced paragraph length to 2–3 lines

- Added structured comparison lists

- Added explicit niche qualifiers (Fintech, SaaS, B2B SEO)

- Included clear “Best for” statements
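
The checklist above can be turned into a rough automated audit. This is a sketch under stated assumptions: the 60-word paragraph limit is our stand-in for "2–3 lines" (line length depends on layout), and the plain substring match for qualifiers is deliberately naive.

```python
import re


def audit_page(text, qualifiers, intro_words=150, max_paragraph_words=60):
    """Check intro qualifiers, paragraph length, and TL;DR presence."""
    words = text.split()
    intro = " ".join(words[:intro_words]).lower()
    # Qualifiers that do not appear verbatim in the first `intro_words` words.
    missing = [q for q in qualifiers if q.lower() not in intro]
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    too_long = [
        i for i, p in enumerate(paragraphs)
        if len(p.split()) > max_paragraph_words
    ]
    return {
        "missing_in_intro": missing,
        "long_paragraphs": too_long,
        "has_tldr": "tl;dr" in text.lower(),
    }


# Made-up sample page: two short paragraphs and one 80-word paragraph.
sample = (
    "TL;DR: We specialize in B2B SaaS and fintech SEO.\n\n"
    "Best for: SaaS teams that need programmatic scaling.\n\n"
    + "filler " * 80
)
report = audit_page(sample, ["Fintech", "SaaS", "B2B SEO"])
print(report)
```

In the sample, "B2B SEO" is reported as missing because the exact phrase never appears in the intro, which is the kind of gap worth fixing by hand.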

AI engines retrieve paragraphs and portions of text, not entire pages. They extract:

- Bullet lists

- Short paragraphs

- Clean entity relationships

- Contextual qualifiers

If your value proposition is buried halfway down the page, you lose extraction probability.

We also removed vague language, such as “We help companies grow,” and replaced it with:

“We specialize in B2B SaaS and fintech SEO with programmatic scaling frameworks.”

Specificity increases extractability, especially when users ask narrow queries that need specific answers.

Here’s what you can implement if you’re unsure about how your output should look:

1. Run your own service query in ChatGPT.

2. Screenshot the output and visit the cited pages.

3. See what they’re doing well, and reverse-engineer the structure they’re using.

4. Build a page that mirrors that structure - but better.

Step 3. AI Channel Attribution & Platform Segmentation

We treated AI search as a distinct acquisition channel.

To measure its performance, we tracked AI search clicks and conversions.

Without attribution, GEO cannot scale. What’s interesting is that AI-driven leads convert at a significantly higher rate than traditional organic leads, as these users arrive:

- Already educated

- Already comparing

- Already seeing you as recommended

Since platform behavior varies, we adjusted formatting for each engine:

- ChatGPT favors structured lists - we included summary tables at the beginning of articles where relevant

- Perplexity favors citation-backed content - we included citations, statistics and quotes relevant to the industry and topic

- Gemini favors definition clarity - we made sure sentences are simplified and easy to understand

If you’re not segmenting, you’re flying blind. For example, we learned that ChatGPT converts differently from Perplexity. That insight influences formatting and targeting decisions.

When you measure AI traffic separately, you uncover:

- Conversion rate differences

- Engagement differences

- Platform-specific performance patterns that can be used for future activities.
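
To make the conversion rate differences concrete, here is a minimal sketch of computing conversion rate per engine from session records. The records are invented for illustration and are not our data; the `(engine, converted)` shape is an assumption about how you export sessions.

```python
from collections import defaultdict


def conversion_by_engine(sessions):
    """sessions: iterable of (engine, converted) pairs -> {engine: rate}."""
    totals = defaultdict(lambda: [0, 0])  # engine -> [sessions, conversions]
    for engine, converted in sessions:
        totals[engine][0] += 1
        totals[engine][1] += int(converted)
    return {engine: conv / count for engine, (count, conv) in totals.items()}


# Illustrative records only.
sessions = [
    ("chatgpt", True), ("chatgpt", False), ("chatgpt", False), ("chatgpt", False),
    ("perplexity", True), ("perplexity", False),
]
rates = conversion_by_engine(sessions)
print(rates)
```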

Here is the 6-month AI traffic and SQL comparison outcome:

- 5.05K AI clicks (+138%)

- 36 SQLs generated (+414%)

And this is the performance segmented by the AI engine:

As a starting point, you can track AI referral sources in GA4 and treat them as an LLM channel group.

In this case, we used our internal tool, Atomic, developed for our needs, which enables us to see much more detail about AI search than GA4 referral traffic does.

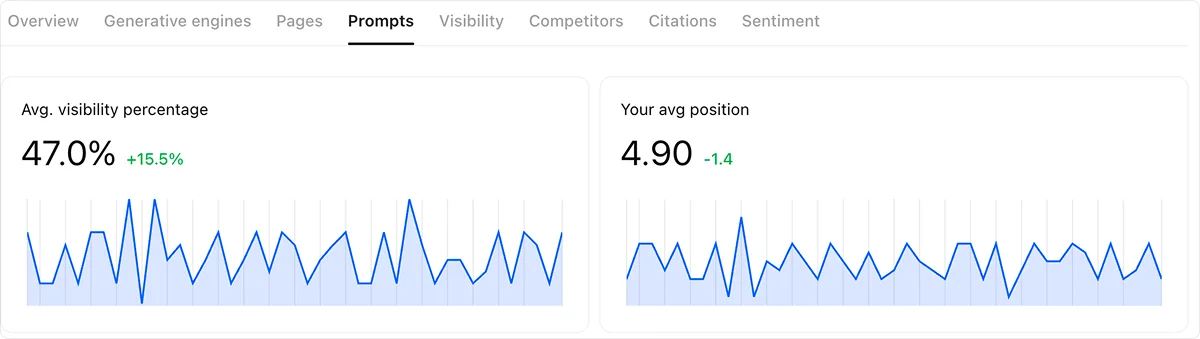
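
If you start with referral data rather than a dedicated tool, one way to build an LLM channel group is to map referrer hostnames to engines. The sketch below is not how Atomic works; the hostname list is an assumption that you should verify against your own referral reports, since engines change domains over time.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; confirm these in your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url):
    """Return the AI engine name for a referrer URL, or None if not an AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


print(classify_referrer("https://www.perplexity.ai/search?q=best+seo+agency"))
print(classify_referrer("https://www.google.com/"))
```

The same hostname list can be expressed as a regex condition in a GA4 custom channel group.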

Step 4. Visibility & Position Monitoring

AI visibility is probabilistic. It is not binary (rank or not).

You might appear:

- In position 2 today

- Position 6 tomorrow

- Not at all next week

We track averages over time, not screenshots.

We tracked:

- Average visibility - “How often are we present in outputs?”

- Average AI ranking position - “How prominently are we featured?”

- Citation frequency - “How reinforced is our authority?”
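
The three metrics above can be computed from a log of prompt runs. This is a sketch with an assumed log shape (`ranked` brands in order of appearance, `cited` source domains) and made-up sample runs; real tools may define visibility and position differently.

```python
from statistics import mean


def visibility_metrics(runs, brand, domain):
    """Compute visibility share, average position, and citation count."""
    # Position is 1-based and only counted for runs where the brand appears.
    positions = [
        run["ranked"].index(brand) + 1 for run in runs if brand in run["ranked"]
    ]
    citations = sum(run["cited"].count(domain) for run in runs)
    return {
        "visibility": len(positions) / len(runs) if runs else 0.0,
        "avg_position": mean(positions) if positions else None,
        "citations": citations,
    }


# Made-up runs for illustration.
runs = [
    {"ranked": ["A", "Us", "B"], "cited": ["us.example", "a.example"]},
    {"ranked": ["A", "B"], "cited": ["a.example"]},
    {"ranked": ["Us", "A"], "cited": ["us.example", "us.example"]},
    {"ranked": ["B", "A", "C", "Us"], "cited": []},
]
metrics = visibility_metrics(runs, brand="Us", domain="us.example")
print(metrics)
```

Averaging over many runs is what smooths out the day-to-day swings described above.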

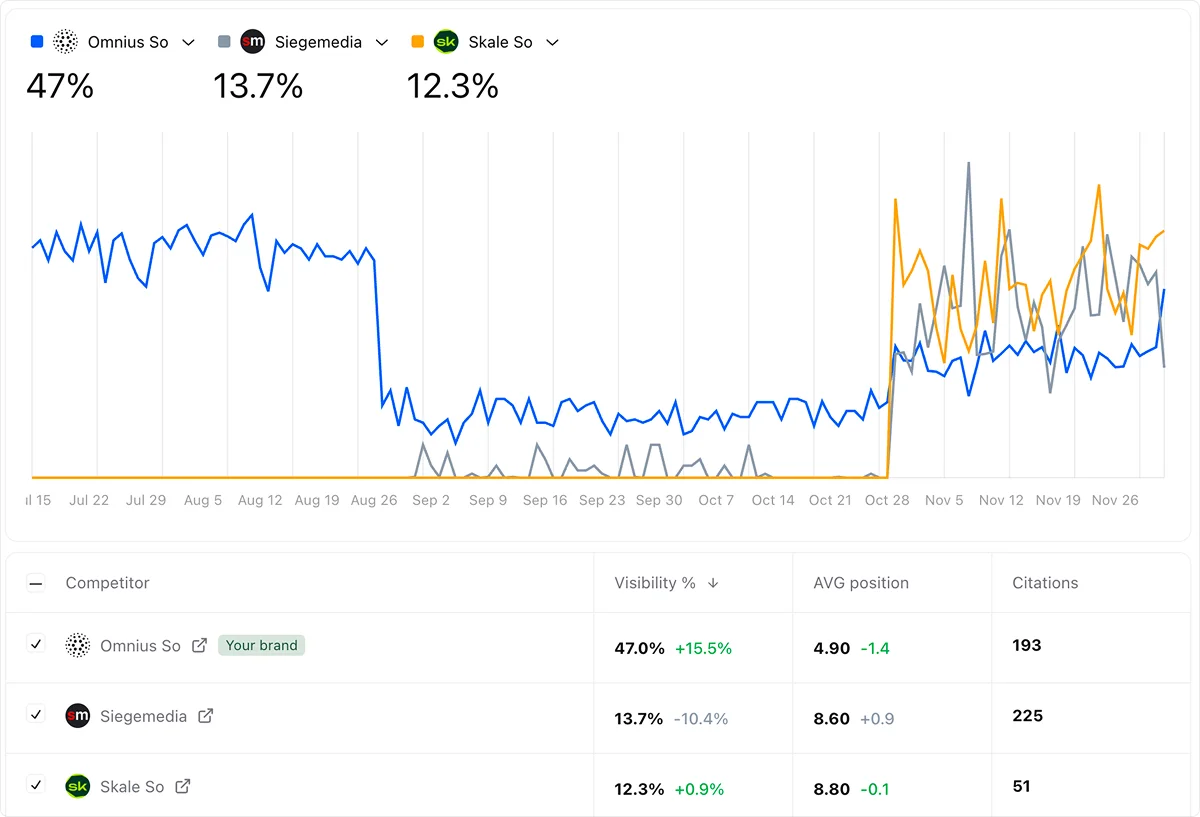

Our average AI visibility percentage and position improved over time. Here’s our trend in visibility and ranking.

Outcome:

- 47% average visibility

- Average position: 4.9

- Upward ranking trend

This proves that GEO compounds, especially if it’s supported by healthy SEO.

What you can implement:

- Track visibility monthly.

- Track citation count growth.

- Optimize prompts where position drops below 5 (Below position 5 = reduced extraction probability).
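
A small sketch of the last rule: flag prompts whose average position is worse than 5, plus prompts where you do not appear at all. The positions below are invented, and treating "never appears" as the highest priority is our assumption.

```python
def prompts_to_rework(avg_positions, threshold=5.0):
    """Return (prompt, position) pairs needing work, most urgent first."""
    flagged = [
        (prompt, pos)
        for prompt, pos in avg_positions.items()
        if pos is None or pos > threshold
    ]
    # None (never appears) sorts first, then the worst positions.
    return sorted(flagged, key=lambda item: (item[1] is not None, -(item[1] or 0)))


# Illustrative monthly averages; None means the brand never appeared.
avg_positions = {
    "best fintech seo agency": 3.2,
    "top saas seo agencies": 6.4,
    "who specializes in b2b seo": None,
    "best geo agency": 5.0,
}
todo = prompts_to_rework(avg_positions)
print(todo)
```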

Step 5. We Identified Lead-focused Prompts to Track

Most companies celebrate “AI mentions.” We tracked visits and clicks from LLM systems, as well as conversions.

Without measurement:

- You don’t know what works and can’t scale

- You can’t prioritize engines

- You can’t validate ROI

Not all prompts are equal. Some generate awareness, drive traffic, and support brand discovery, and most businesses track them. But they weren’t important to us at this stage.

We mapped the prompts that drive SQLs for us, since high-intent prompts correlate directly with qualified leads.

We identified:

- “Best fintech SEO agency” - high SQL correlation

- “What is GEO?” - traffic but low SQL

- “Top SaaS SEO agencies” - high SQL correlation

Below is the performance we tracked for revenue-driving prompts.

Tracking only the most important prompts allowed us to:

- Double down on revenue prompts

- De-prioritize informational vanity prompts
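
One way to separate revenue prompts from vanity prompts is to rank them by SQLs per click. This is a sketch with invented numbers (not our tracked performance), and the 5% cutoff is a hypothetical threshold you would tune yourself.

```python
def split_prompts(stats, min_sql_rate=0.05):
    """stats: {prompt: (clicks, sqls)} -> (revenue_prompts, vanity_prompts)."""
    rates = {
        prompt: (sqls / clicks if clicks else 0.0)
        for prompt, (clicks, sqls) in stats.items()
    }
    ranked = sorted(rates.items(), key=lambda kv: kv[1], reverse=True)
    revenue = [p for p, rate in ranked if rate >= min_sql_rate]
    vanity = [p for p, rate in ranked if rate < min_sql_rate]
    return revenue, vanity


# Illustrative (clicks, SQLs) per prompt.
stats = {
    "Best fintech SEO agency": (40, 4),
    "What is GEO?": (400, 2),
    "Top SaaS SEO agencies": (50, 3),
}
revenue, vanity = split_prompts(stats)
print(revenue, vanity)
```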

For the ones that were important to us, we made sure to create well-structured, high-quality content that provides instant value.

And it got us some pretty good improvements in ranking and citations.

This helped us shift SEO from keyword tracking to conversation tracking.

Step 6. Competitive AI Benchmarking

AI search is citation-based competition. You are not competing for blue links. You are competing for recommendation slots.

We benchmarked:

- Who appears with us

- Who appears above us

- Who is cited more frequently

This helped us notice patterns:

- Agencies with clearer positioning were cited more.

- Agencies with generic messaging were cited less.

- Agencies dominating one niche (Fintech or SaaS) had stronger extraction consistency.

This allowed us to sharpen our niche language and use this knowledge to our own benefit. Here is our competitor visibility comparison:

Outcome:

- Omnius leads in AI visibility (47%)

- 193 citations tracked across only the 15 prompts we flagged as most important (the bottom-of-funnel ones).

What you can implement:

- Run monthly AI prompt audits.

- Track citation frequency per competitor.

- Adjust positioning language accordingly.
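
Citation frequency per competitor can be tracked as a share of all citations collected in a prompt audit. A minimal sketch, assuming you have already gathered the cited domains into a flat list (the domains here are placeholders):

```python
from collections import Counter


def citation_share(cited_domains):
    """Return each domain's share of total citations, most cited first."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    return {domain: count / total for domain, count in counts.most_common()}


# Placeholder domains collected from one audit.
audit = ["us.example", "a.example", "us.example", "b.example"]
shares = citation_share(audit)
print(shares)
```

Comparing shares month over month shows whether a positioning change moved you relative to competitors, not just in absolute count.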

AI search rewards clarity over volume.

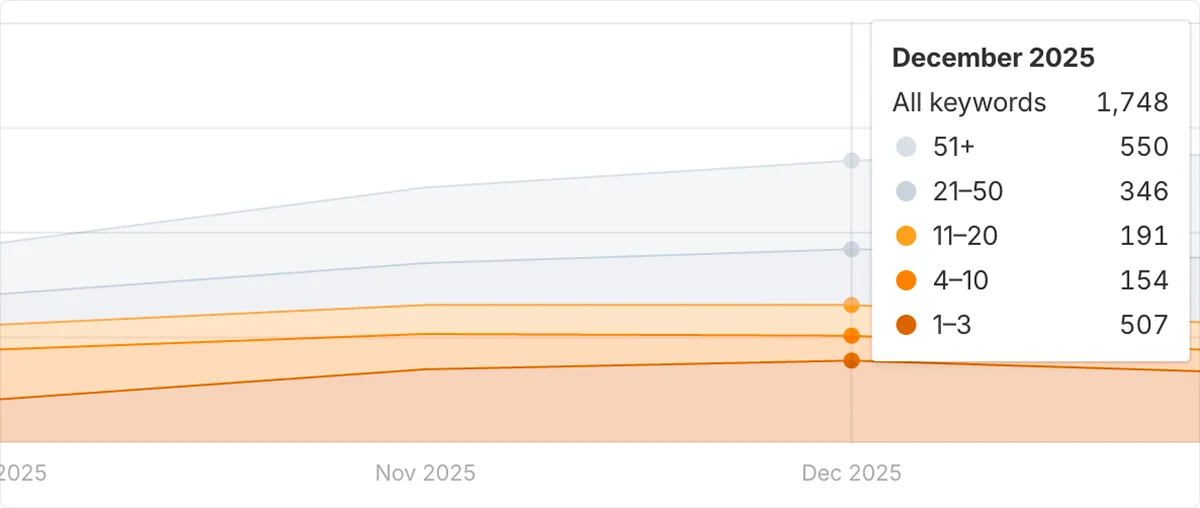

Step 7. We Tracked and Optimized for Google AI Overviews

While most companies focused only on ChatGPT visibility, we monitored Google AI Overviews progression as a parallel signal.

Because here’s the reality - AI Overviews are not a separate ecosystem.

They are a hybrid between traditional SEO and generative extraction.

If you win AI Overviews:

- You reinforce authority

- You increase citation probability in LLMs

- You strengthen entity trust across systems

We treated AI Overviews as a reinforcement layer for GEO.

We tracked:

- Presence inside AI Overview summaries

- Frequency of brand mentions

- Position relative to competitors

- Prompt alignment with high-intent queries

We didn’t just ask:

“Are we in AI Overviews?”

We asked:

“Are we appearing for the prompts that matter?”

Here is the progression of our AI Overview visibility tied to tracked prompts.

Our AI Overview visibility increased alongside LLM citation growth.
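
To follow that progression yourself, you can log whether the brand appeared in the AI Overview for each tracked prompt and aggregate by month. This sketch assumes you record the checks manually or with a tool of your choice; the records are invented.

```python
from collections import defaultdict


def monthly_presence(checks):
    """checks: iterable of (month 'YYYY-MM', prompt, present) -> {month: share}."""
    seen = defaultdict(list)
    for month, _prompt, present in checks:
        seen[month].append(bool(present))
    # Sort by month so the result reads as a time series.
    return {month: sum(flags) / len(flags) for month, flags in sorted(seen.items())}


# Illustrative checks for two tracked prompts over two months.
checks = [
    ("2025-02", "best fintech seo agency", True),
    ("2025-01", "best fintech seo agency", False),
    ("2025-01", "top saas seo agencies", True),
    ("2025-02", "top saas seo agencies", True),
]
trend = monthly_presence(checks)
print(trend)
```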

What Most Companies Get Wrong

Usually, when it comes to AI search, companies:

- Track keywords, not prompts

- Publish content, but not modular answers

- Ignore citation frequency

- Don’t measure engine-specific performance

GEO requires a different operating system.

Final Takeaway

Omnius didn’t grow in AI search by accident.

We built:

- Structured content for extraction

- Prompt-level measurement

- Competitive citation dominance

- Platform-level attribution

AI search is measurable.

It’s compounding.

And it’s converting.

Ready to Grow in LLM Search?

If your brand isn’t visible in ChatGPT or AI Overviews for decision-stage prompts, you’re missing a pipeline.

We help companies:

- Dominate high-intent AI prompts

- Increase citation share

- Track LLM-driven revenue

- Turn AI visibility into qualified leads

AI search is becoming a competitive moat.

Let’s build yours.

Schedule a call with Omnius to unlock your LLM growth.
